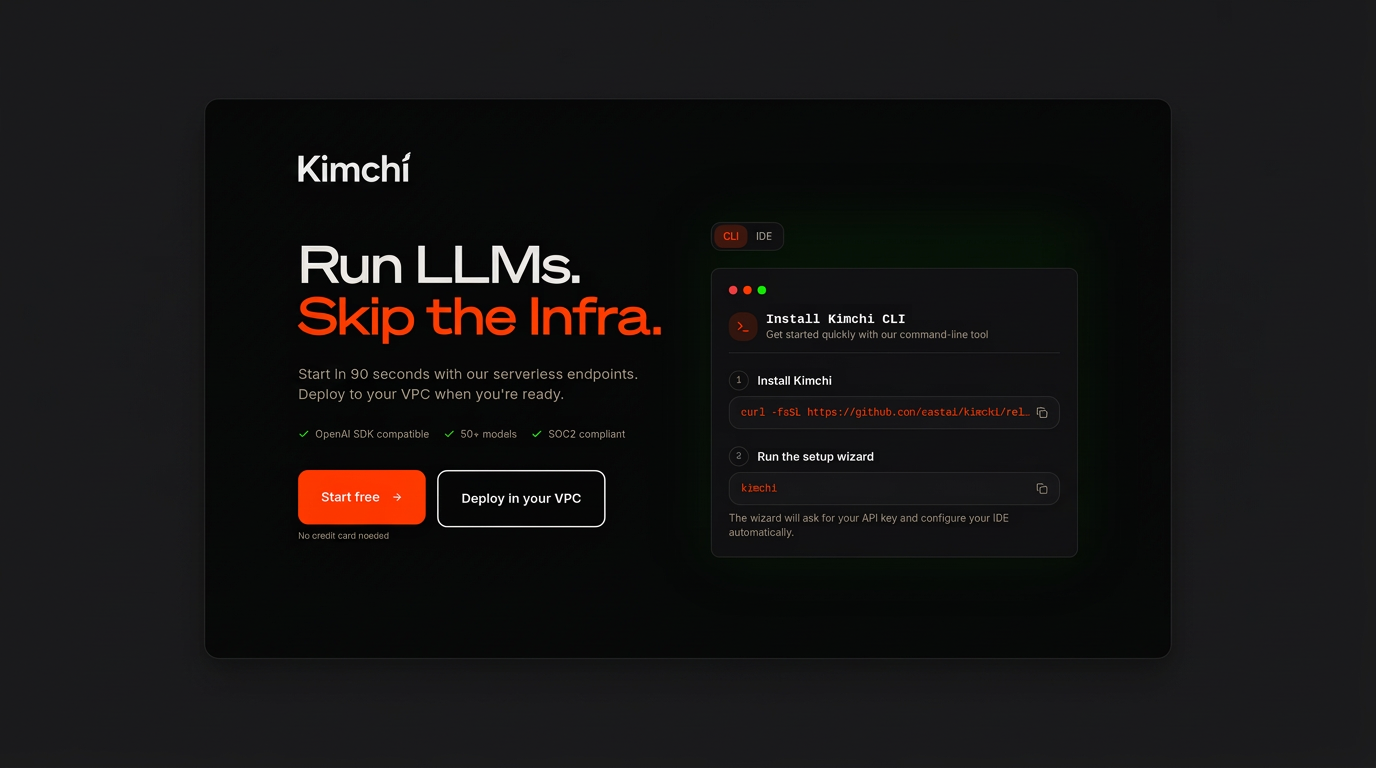

Kimchi

Private AI inference, built to scale

About Kimchi

Kimchi.dev is a managed AI inference platform that lets engineering teams build, test, and deploy AI applications through a single OpenAI-compatible API. It takes on the operational work of GPUs, model hosting, scaling, and deployment pipelines, so developers can focus on building product features.

The platform supports the full lifecycle of AI development, from early experimentation to enterprise-scale production. Teams can start in a fully managed environment for rapid prototyping and iteration, then move to production deployments inside their own Virtual Private Cloud (VPC). This gives them control over data, security, and compliance, while the API interface stays the same and existing integrations need no changes.

Kimchi.dev includes built-in governance and observability from day one, with visibility into usage across engineers, teams, and projects. Organizations can track costs, monitor resource consumption, and maintain accountability as AI adoption spreads across the business.

Under the hood, the platform handles GPU provisioning, model serving, autoscaling, failover, and zero-downtime updates automatically. This keeps performance reliable as load grows, without dedicated DevOps or MLOps engineering effort for inference workloads. As demand increases, teams can scale into dedicated GPU capacity within their VPC while keeping APIs and workflows unchanged.

By combining managed simplicity with private infrastructure control, Kimchi.dev offers a faster, more secure, and more efficient way to build and operate AI-powered applications from prototype to production.
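To make the "OpenAI-compatible API" and "no changes to existing integrations" points concrete, here is a minimal sketch of what a client-side request might look like. It assumes the platform accepts the standard OpenAI-style `POST /chat/completions` request shape with a bearer token. The base URLs, API key, and model name below are hypothetical placeholders, not documented Kimchi values, and the request is only built, never sent.

```python
import json
import urllib.request

# Hypothetical endpoints: placeholders for illustration, not documented Kimchi URLs.
MANAGED_BASE_URL = "https://managed.example.invalid/v1"
VPC_BASE_URL = "https://inference.internal.example.invalid/v1"


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The same call site targets either environment; only the base URL differs.
# "sk-placeholder" and "example-model" are made-up values.
managed = build_chat_request(MANAGED_BASE_URL, "sk-placeholder", "example-model", "Hello")
private = build_chat_request(VPC_BASE_URL, "sk-placeholder", "example-model", "Hello")
```

Under these assumptions, moving from the managed environment to a VPC deployment would amount to a configuration change (the base URL), while the request body and headers stay identical.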
